Databricks Certified Data Engineer Professional Exam Practice Questions (P. 1)

Question #1

An upstream system has been configured to pass the date for a given batch of data to the Databricks Jobs API as a parameter. The notebook to be scheduled will use this parameter to load data with the following code: `df = spark.read.format("parquet").load(f"/mnt/source/{date}")`

Which code block should be used to create the date Python variable used in the above code block?

- A. `date = spark.conf.get("date")`

- B. `input_dict = input()` followed by `date = input_dict["date"]`

- C. `import sys` followed by `date = sys.argv[1]`

- D. `date = dbutils.notebooks.getParam("date")`

- E. `dbutils.widgets.text("date", "null")` followed by `date = dbutils.widgets.get("date")` (Most Voted)

Correct Answer:

E

GPT-4o - Answer

Creating a widget with `dbutils.widgets.text("date", "null")` and reading it with `date = dbutils.widgets.get("date")` is the standard way to retrieve parameters passed to a Databricks notebook. When a job runs the notebook, a parameter passed through the Jobs API populates the widget with the same name, so `dbutils.widgets.get("date")` returns the date supplied by the upstream system. The default value "null" is used only when no parameter is passed, for example during interactive development, so the widget always has a value.
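A minimal plain-Python sketch of the pattern in option E follows. `FakeWidgets` is a hypothetical stand-in that only mimics the described behavior of `dbutils.widgets.text` and `dbutils.widgets.get` (a value passed by the job wins over the widget default); it is not the Databricks implementation, and the date value is made up. The sketch also shows that the load path must interpolate the variable with braces, `{date}`.

```python
class FakeWidgets:
    """Hypothetical stand-in for dbutils.widgets, for illustration only."""

    def __init__(self, job_params=None):
        # Parameters supplied by the job run (e.g. via the Jobs API).
        self._job_params = dict(job_params or {})
        self._defaults = {}

    def text(self, name, default_value):
        # Register a text widget with a default value.
        self._defaults[name] = default_value

    def get(self, name):
        # A value passed by the job takes precedence over the default.
        if name in self._job_params:
            return self._job_params[name]
        return self._defaults[name]


def source_path(widgets):
    widgets.text("date", "null")
    date = widgets.get("date")
    # Braces, not parentheses, interpolate the variable into the f-string.
    return f"/mnt/source/{date}"


print(source_path(FakeWidgets({"date": "2024-01-15"})))  # /mnt/source/2024-01-15
print(source_path(FakeWidgets()))  # /mnt/source/null
```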

Question #2

The Databricks workspace administrator has configured interactive clusters for each of the data engineering groups. To control costs, clusters are set to terminate after 30 minutes of inactivity. Each user should be able to execute workloads against their assigned clusters at any time of the day.

Assuming users have been added to a workspace but not granted any permissions, which of the following describes the minimal permissions a user would need to start and attach to an already configured cluster?

- A. "Can Manage" privileges on the required cluster

- B. Workspace Admin privileges, cluster creation allowed, "Can Attach To" privileges on the required cluster

- C. Cluster creation allowed, "Can Attach To" privileges on the required cluster

- D. "Can Restart" privileges on the required cluster (Most Voted)

- E. Cluster creation allowed, "Can Restart" privileges on the required cluster

Correct Answer:

D

GPT-4o - Answer

The "Can Restart" permission lets a user attach to a cluster and also start, restart, or terminate it. Because these clusters auto-terminate after 30 minutes of inactivity, users must be able to start a terminated cluster on their own; "Can Attach To" alone only allows attaching to a cluster that is already running. "Can Manage" would also work but grants more than necessary, and neither cluster creation rights nor workspace admin privileges are needed to start an existing cluster. "Can Restart" is therefore the minimal permission.
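The reasoning above can be modeled as choosing the lowest permission level whose allowed actions cover what the user needs. The action labels in this sketch are hypothetical and the table is simplified to the actions discussed here; it is not the full Databricks permission matrix.

```python
# Cluster permission levels with a simplified set of allowed actions
# (hypothetical action labels, limited to what this question discusses).
PERMISSION_ACTIONS = {
    "Can Attach To": {"attach"},
    "Can Restart": {"attach", "start", "restart", "terminate"},
    "Can Manage": {"attach", "start", "restart", "terminate", "edit", "manage_permissions"},
}

# Levels ordered from least to most privileged.
LEVEL_ORDER = ["Can Attach To", "Can Restart", "Can Manage"]


def minimal_level(required_actions):
    """Return the lowest permission level that covers every required action."""
    for level in LEVEL_ORDER:
        if set(required_actions) <= PERMISSION_ACTIONS[level]:
            return level
    return None


print(minimal_level({"attach"}))           # Can Attach To
print(minimal_level({"start", "attach"}))  # Can Restart
```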

Question #3

When scheduling Structured Streaming jobs for production, which configuration automatically recovers from query failures and keeps costs low?

- A. Cluster: New Job Cluster; Retries: Unlimited; Maximum Concurrent Runs: Unlimited

- B. Cluster: New Job Cluster; Retries: None; Maximum Concurrent Runs: 1

- C. Cluster: Existing All-Purpose Cluster; Retries: Unlimited; Maximum Concurrent Runs: 1

- D. Cluster: New Job Cluster; Retries: Unlimited; Maximum Concurrent Runs: 1 (Most Voted)

- E. Cluster: Existing All-Purpose Cluster; Retries: None; Maximum Concurrent Runs: 1

Correct Answer:

D

GPT-4o - Answer

Setting "Maximum Concurrent Runs" to 1 ensures that only one instance of the streaming query runs at a time; overlapping runs would conflict over the same checkpoint location and add cost. Setting "Retries" to Unlimited lets the job scheduler restart the query automatically after a failure, and the query resumes from its checkpoint without manual intervention. A new job cluster is cheaper than an all-purpose cluster because job compute is billed at a lower rate and the cluster exists only for the lifetime of the run. Option D therefore combines automatic recovery with the lowest cost.
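As a hedged sketch, the option D settings can be expressed as a job definition in the style of the Databricks Jobs API 2.1. The field names `max_concurrent_runs`, `new_cluster`, and the task-level `max_retries` (where `-1` means retry indefinitely) are assumed from that API's documentation and should be checked against the current reference; the job name, notebook path, Spark version, node type, and worker count are hypothetical placeholders. The code only builds and prints the payload; it does not call the API.

```python
import json


def streaming_job_settings(notebook_path, spark_version, node_type_id):
    """Build Jobs API 2.1-style settings matching option D (values are placeholders)."""
    return {
        "name": "streaming-ingest",  # hypothetical job name
        # Only one run of the job (and therefore one streaming query) at a time.
        "max_concurrent_runs": 1,
        "tasks": [
            {
                "task_key": "stream",
                "notebook_task": {"notebook_path": notebook_path},
                # A new job cluster is created for the run and removed afterwards.
                "new_cluster": {
                    "spark_version": spark_version,
                    "node_type_id": node_type_id,
                    "num_workers": 2,
                },
                # -1 asks the scheduler to retry failed runs indefinitely.
                "max_retries": -1,
            }
        ],
    }


settings = streaming_job_settings("/Repos/team/stream_ingest", "<spark-version>", "<node-type>")
print(json.dumps(settings, indent=2))
```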

Question #4

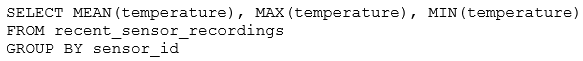

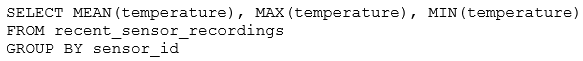

The data engineering team has configured a Databricks SQL query and alert to monitor the values in a Delta Lake table. The recent_sensor_recordings table contains an identifying sensor_id alongside the timestamp and temperature for the most recent 5 minutes of recordings.

The below query is used to create the alert:

The query is set to refresh each minute and always completes in less than 10 seconds. The alert is set to trigger when mean(temperature) > 120. Notifications are sent at most once every minute.

If this alert raises notifications for 3 consecutive minutes and then stops, which statement must be true?

- A. The total average temperature across all sensors exceeded 120 on three consecutive executions of the query

- B. The recent_sensor_recordings table was unresponsive for three consecutive runs of the query

- C. The source query failed to update properly for three consecutive minutes and then restarted

- D. The maximum temperature recording for at least one sensor exceeded 120 on three consecutive executions of the query

- E. The average temperature recordings for at least one sensor exceeded 120 on three consecutive executions of the query (Most Voted)

Correct Answer:

E
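The query itself appears only in the image. Assuming, as answer E implies, that it returns the mean temperature per sensor_id and that the alert condition is met when a sensor's mean exceeds 120, the sketch below shows why a per-sensor average can meet the condition even when the overall average across all sensors does not. The readings are hypothetical, and `any_sensor_above` models the condition in option E rather than how Databricks SQL evaluates alerts.

```python
from collections import defaultdict
from statistics import mean


def mean_temperature_by_sensor(recordings):
    """Group (sensor_id, temperature) rows and average them per sensor."""
    temps = defaultdict(list)
    for sensor_id, temperature in recordings:
        temps[sensor_id].append(temperature)
    return {sensor_id: mean(values) for sensor_id, values in temps.items()}


def any_sensor_above(recordings, threshold=120):
    """True if at least one sensor's mean temperature exceeds the threshold."""
    return any(avg > threshold for avg in mean_temperature_by_sensor(recordings).values())


# Hypothetical readings: sensor 1 averages 125, sensor 2 averages 100,
# so the overall average (112.5) is below 120 but sensor 1 exceeds it.
readings = [(1, 130.0), (1, 120.0), (2, 95.0), (2, 105.0)]
print(mean_temperature_by_sensor(readings))  # {1: 125.0, 2: 100.0}
print(any_sensor_above(readings))            # True
```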

Question #5

A junior developer complains that the code in their notebook isn't producing the correct results in the development environment. A shared screenshot reveals that while they're using a notebook versioned with Databricks Repos, they're using a personal branch that contains old logic. The desired branch named dev-2.3.9 is not available from the branch selection dropdown.

Which approach will allow this developer to review the current logic for this notebook?

- A. Use Repos to make a pull request use the Databricks REST API to update the current branch to dev-2.3.9

- B. Use Repos to pull changes from the remote Git repository and select the dev-2.3.9 branch. (Most Voted)

- C. Use Repos to checkout the dev-2.3.9 branch and auto-resolve conflicts with the current branch

- D. Merge all changes back to the main branch in the remote Git repository and clone the repo again

- E. Use Repos to merge the current branch and the dev-2.3.9 branch, then make a pull request to sync with the remote repository

Correct Answer:

B

GPT-4o - Answer

The dev-2.3.9 branch is missing from the branch dropdown because the local copy of the repo has not yet retrieved it from the remote. Pulling changes from the remote Git repository with Repos brings in the latest remote state, after which the developer can select dev-2.3.9 and review its current logic. The other options merge, overwrite, or re-clone code, none of which is needed just to view an existing remote branch.

Question #6

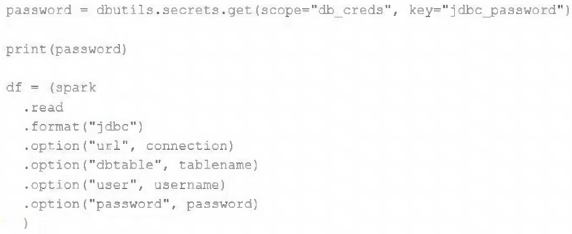

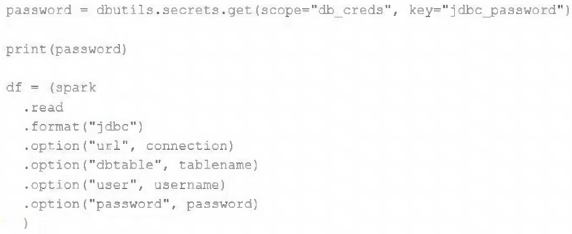

The security team is exploring whether or not the Databricks secrets module can be leveraged for connecting to an external database.

After testing the code with all Python variables being defined with strings, they upload the password to the secrets module and configure the correct permissions for the currently active user. They then modify their code to the following (leaving all other variables unchanged).

Which statement describes what will happen when the above code is executed?

- A. The connection to the external table will fail; the string "REDACTED" will be printed.

- B. An interactive input box will appear in the notebook; if the right password is provided, the connection will succeed and the encoded password will be saved to DBFS.

- C. An interactive input box will appear in the notebook; if the right password is provided, the connection will succeed and the password will be printed in plain text.

- D. The connection to the external table will succeed; the string value of password will be printed in plain text.

- E. The connection to the external table will succeed; the string "REDACTED" will be printed. (Most Voted)

Correct Answer:

E
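Databricks redacts secret values retrieved with `dbutils.secrets.get` when they appear in notebook output, while the real value is still used by the connection, which is why option E holds. The function below is a simplified pure-Python model of that redaction, not Databricks' implementation; the password value is hypothetical. In Databricks the printed placeholder is `[REDACTED]`.

```python
def redact(output, secret_values):
    """Replace any literal secret value in notebook output with [REDACTED]."""
    for value in secret_values:
        if value:  # skip empty strings, which would match everywhere
            output = output.replace(value, "[REDACTED]")
    return output


# Hypothetical value standing in for the result of dbutils.secrets.get(...).
password = "s3cr3t-pw"
print(redact(f"password = {password}", [password]))  # password = [REDACTED]
```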

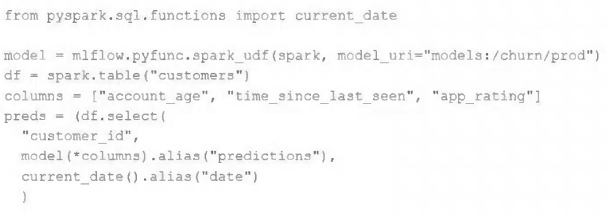

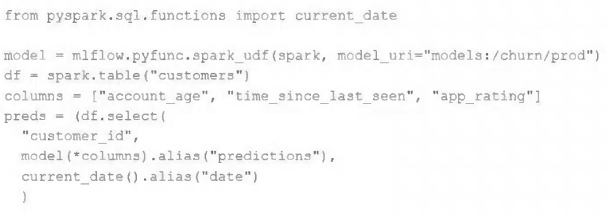

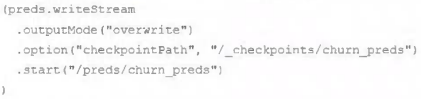

Question #7

The data science team has created and logged a production model using MLflow. The following code correctly imports and applies the production model to output the predictions as a new DataFrame named preds with the schema "customer_id LONG, predictions DOUBLE, date DATE".

The data science team would like predictions saved to a Delta Lake table with the ability to compare all predictions across time. Churn predictions will be made at most once per day.

Which code block accomplishes this task while minimizing potential compute costs?

- A. `preds.write.mode("append").saveAsTable("churn_preds")` (Most Voted)

- B. `preds.write.format("delta").save("/preds/churn_preds")`

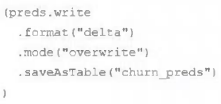

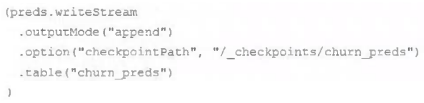

- C

- D

- E

Correct Answer:

A

Question #8

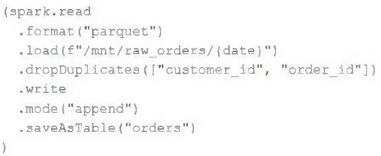

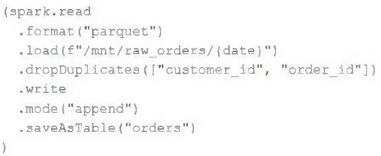

An upstream source writes Parquet data as hourly batches to directories named with the current date. A nightly batch job runs the following code to ingest all data from the previous day as indicated by the date variable:

Assume that the fields customer_id and order_id serve as a composite key to uniquely identify each order.

If the upstream system is known to occasionally produce duplicate entries for a single order hours apart, which statement is correct?

- A. Each write to the orders table will only contain unique records, and only those records without duplicates in the target table will be written.

- B. Each write to the orders table will only contain unique records, but newly written records may have duplicates already present in the target table. (Most Voted)

- C. Each write to the orders table will only contain unique records; if existing records with the same key are present in the target table, these records will be overwritten.

- D. Each write to the orders table will only contain unique records; if existing records with the same key are present in the target table, the operation will fail.

- E. Each write to the orders table will run deduplication over the union of new and existing records, ensuring no duplicate records are present.

Correct Answer:

B
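The ingestion code is shown only in the image. Assuming, as answer B implies, that it deduplicates each nightly batch on customer_id and order_id (for example with `dropDuplicates`) and then appends to the orders table, the plain-Python sketch below shows why duplicates can still accumulate: deduplication never looks at records already in the target. The helper functions and rows are hypothetical. Spark's `dropDuplicates` does not guarantee which duplicate is kept; this sketch simply keeps the first.

```python
def drop_duplicates(rows, key_fields):
    """Keep the first row seen for each composite key within one batch."""
    seen = set()
    unique = []
    for row in rows:
        key = tuple(row[field] for field in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


def nightly_append(target, batch, key_fields=("customer_id", "order_id")):
    """Deduplicate only the new batch, then append it to the target table."""
    target.extend(drop_duplicates(batch, key_fields))
    return target


orders = []
day_1 = [{"customer_id": 1, "order_id": 10}, {"customer_id": 1, "order_id": 10}]
day_2 = [{"customer_id": 1, "order_id": 10}, {"customer_id": 2, "order_id": 20}]
nightly_append(orders, day_1)
nightly_append(orders, day_2)
print(len(orders))  # 3: order (1, 10) appears twice, once from each night
```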

Question #9

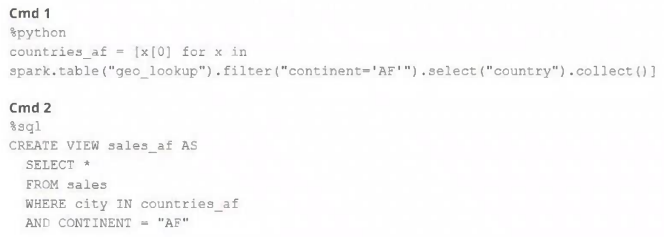

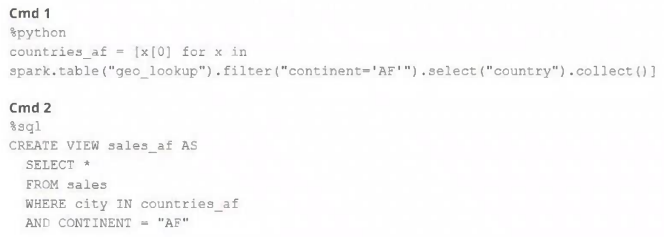

A junior member of the data engineering team is exploring the language interoperability of Databricks notebooks. The intended outcome of the below code is to register a view of all sales that occurred in countries on the continent of Africa that appear in the geo_lookup table.

Before executing the code, running SHOW TABLES on the current database indicates the database contains only two tables: geo_lookup and sales.

Which statement correctly describes the outcome of executing these command cells in order in an interactive notebook?

- A. Both commands will succeed. Running `SHOW TABLES` will show that countries_af and sales_af have been registered as views.

- B. Cmd 1 will succeed. Cmd 2 will search all accessible databases for a table or view named countries_af: if this entity exists, Cmd 2 will succeed.

- C. Cmd 1 will succeed and Cmd 2 will fail. countries_af will be a Python variable representing a PySpark DataFrame.

- D. Both commands will fail. No new variables, tables, or views will be created.

- E. Cmd 1 will succeed and Cmd 2 will fail. countries_af will be a Python variable containing a list of strings. (Most Voted)

Correct Answer:

E

Question #10

A Delta table of weather records is partitioned by date and has the following schema: `date DATE, device_id INT, temp FLOAT, latitude FLOAT, longitude FLOAT`

To find all the records from within the Arctic Circle, you execute a query with the following filter: `latitude > 66.3`

Which statement describes how the Delta engine identifies which files to load?

- A. All records are cached to an operational database and then the filter is applied

- B. The Parquet file footers are scanned for min and max statistics for the latitude column

- C. All records are cached to attached storage and then the filter is applied

- D. The Delta log is scanned for min and max statistics for the latitude column (Most Voted)

- E. The Hive metastore is scanned for min and max statistics for the latitude column

Correct Answer:

D

GPT-4o - Answer

When a data file is added to a Delta table, Delta Lake records column-level statistics for it, including the minimum and maximum values of each column (by default, the first 32 columns), in the transaction log. For a filter such as `latitude > 66.3`, the engine reads these statistics from the Delta log and skips every file whose maximum latitude is 66.3 or lower, so only files that might contain matching records are loaded. Because the table is partitioned by date, partition pruning does not help with a latitude filter; data skipping based on the Delta log statistics is what limits the files read. Parquet file footers also contain min/max statistics, but the Delta engine does not need to open each file's footer to make this decision, which is why D rather than B is correct.
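The file-selection step can be sketched as follows. `FileStats` and `files_to_scan` are hypothetical names and the per-file values are made up; in Delta Lake the equivalent min/max values come from the statistics stored in the transaction log, which the engine consults before opening any data file.

```python
from dataclasses import dataclass


@dataclass
class FileStats:
    """Per-file statistics of the kind Delta Lake records in its transaction log."""
    path: str
    min_latitude: float  # would be used for predicates such as latitude < x
    max_latitude: float


def files_to_scan(files, lower_bound):
    """Keep only files that may contain rows with latitude > lower_bound."""
    return [f.path for f in files if f.max_latitude > lower_bound]


# Hypothetical file-level statistics.
log = [
    FileStats("part-0001.parquet", -10.0, 40.5),
    FileStats("part-0002.parquet", 55.0, 70.2),
    FileStats("part-0003.parquet", 67.0, 89.9),
]
print(files_to_scan(log, 66.3))  # ['part-0002.parquet', 'part-0003.parquet']
```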
