Databricks Certified Data Engineer Professional Exam Practice Questions (P. 3)

Question #21

A Structured Streaming job deployed to production has been experiencing delays during peak hours of the day. At present, during normal execution, each microbatch of data is processed in less than 3 seconds. During peak hours of the day, execution time for each microbatch becomes very inconsistent, sometimes exceeding 30 seconds. The streaming write is currently configured with a trigger interval of 10 seconds.

Holding all other variables constant and assuming records need to be processed in less than 10 seconds, which adjustment will meet the requirement?

- A. Decrease the trigger interval to 5 seconds; triggering batches more frequently allows idle executors to begin processing the next batch while longer running tasks from previous batches finish.

- B. Increase the trigger interval to 30 seconds; setting the trigger interval near the maximum execution time observed for each batch is always best practice to ensure no records are dropped.

- C. The trigger interval cannot be modified without modifying the checkpoint directory; to maintain the current stream state, increase the number of shuffle partitions to maximize parallelism.

- D. Use the trigger once option and configure a Databricks job to execute the query every 10 seconds; this ensures all backlogged records are processed with each batch.

- E. Decrease the trigger interval to 5 seconds; triggering batches more frequently may prevent records from backing up and large batches from causing spill. (Most Voted)

Correct Answer:

E

GPT-4o - Answer

Option D, which pairs the trigger-once option with a job scheduled every 10 seconds, is not an effective fix for the situation described. During peak hours, microbatch execution time far exceeds the 10-second trigger interval, so changing how often the query is launched does not by itself address the underlying processing capacity. Option E instead shortens the trigger interval so that fewer records accumulate per microbatch, which may keep batches small enough to avoid spill. Outside the constraints of the question, improving processing efficiency through optimization or scaling out, for example by adding executors or tuning shuffle partitions, is also worth exploring. Always consider the architecture's ability to handle peak loads when configuring streaming jobs.
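
The reasoning behind option E can be illustrated with simple arithmetic. The sketch below assumes a stream that keeps up with its input, so each microbatch holds roughly the records that arrived during one trigger interval. The peak arrival rate and the spill threshold are hypothetical numbers chosen for illustration, not values from the question.

```python
def records_per_batch(arrival_rate_per_s: float, trigger_interval_s: float) -> float:
    """Approximate number of records accumulated during one trigger interval."""
    return arrival_rate_per_s * trigger_interval_s


def would_spill(batch_records: float, spill_threshold_records: float) -> bool:
    """Toy rule: a batch spills once it exceeds what fits in memory."""
    return batch_records > spill_threshold_records


# Hypothetical peak-hour figures (assumptions, not taken from the question).
PEAK_RATE = 50_000         # records per second
SPILL_THRESHOLD = 300_000  # records that fit in executor memory

for interval in (10, 5):
    size = records_per_batch(PEAK_RATE, interval)
    print(f"trigger={interval}s -> {size:,.0f} records per batch, "
          f"spill={would_spill(size, SPILL_THRESHOLD)}")
```

In PySpark, the interval itself is set on the streaming write with `.trigger(processingTime="5 seconds")`.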

Question #22

Which statement describes Delta Lake Auto Compaction?

- A. An asynchronous job runs after the write completes to detect if files could be further compacted; if yes, an OPTIMIZE job is executed toward a default of 1 GB.

- B. Before a Jobs cluster terminates, OPTIMIZE is executed on all tables modified during the most recent job.

- C. Optimized writes use logical partitions instead of directory partitions; because partition boundaries are only represented in metadata, fewer small files are written.

- D. Data is queued in a messaging bus instead of committing data directly to memory; all data is committed from the messaging bus in one batch once the job is complete.

- E. An asynchronous job runs after the write completes to detect if files could be further compacted; if yes, an OPTIMIZE job is executed toward a default of 128 MB. (Most Voted)

Correct Answer:

E

GPT-4o - Answer

Auto Compaction in Delta Lake improves storage efficiency by reducing the number of small files. As the correct option describes, after a write completes a job checks whether files could be compacted further and, if so, runs an OPTIMIZE job toward a default target of 128 MB. The 1 GB figure in option A is the default target of a manually run OPTIMIZE, not of Auto Compaction, so the correct answer is E.
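
As a minimal sketch of how Auto Compaction is switched on for a single table, the helper below builds the `ALTER TABLE` statement that sets the `delta.autoOptimize.autoCompact` table property. The helper name and the `events` table name are placeholders introduced for this example.

```python
def enable_auto_compaction_sql(table_name: str) -> str:
    """Build an ALTER TABLE statement that turns on Auto Compaction for one table."""
    return (
        f"ALTER TABLE {table_name} "
        "SET TBLPROPERTIES (delta.autoOptimize.autoCompact = true)"
    )


# 'events' is a placeholder table name used only for illustration.
statement = enable_auto_compaction_sql("events")
print(statement)
```

On a Databricks cluster the resulting string would typically be passed to `spark.sql(statement)`.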

Question #23

Which statement characterizes the general programming model used by Spark Structured Streaming?

- A. Structured Streaming leverages the parallel processing of GPUs to achieve highly parallel data throughput.

- B. Structured Streaming is implemented as a messaging bus and is derived from Apache Kafka.

- C. Structured Streaming uses specialized hardware and I/O streams to achieve sub-second latency for data transfer.

- D. Structured Streaming models new data arriving in a data stream as new rows appended to an unbounded table. (Most Voted)

- E. Structured Streaming relies on a distributed network of nodes that hold incremental state values for cached stages.

Correct Answer:

D

GPT-4o - Answer

D is indeed the correct choice, as Spark Structured Streaming treats incoming data streams like rows continuously appended to an unbounded table. This model simplifies the conceptual approach to stream processing by allowing developers to use familiar batch processing techniques on streaming data, enabling incremental execution of queries, which are conceptually equivalent to running batch queries on static data. Thus, Structured Streaming provides a unified and efficient framework for handling data that is dynamically evolving, making it a powerful tool for real-time analytics.
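
The unbounded-table idea can be mimicked in plain Python without Spark. This toy sketch appends each microbatch to a growing list and checks that an incrementally maintained count always matches a batch count over everything received so far. The event data and function names are made up for the example.

```python
from collections import Counter


def batch_query(table):
    """Batch view: count events per user over the whole table."""
    return Counter(user for user, _event in table)


def incremental_update(state, new_rows):
    """Streaming view: fold only the newly appended rows into the running result."""
    state.update(user for user, _event in new_rows)
    return state


unbounded_table = []        # grows as new rows arrive
running_counts = Counter()  # result maintained incrementally

micro_batches = [
    [("alice", "click"), ("bob", "view")],
    [("alice", "view")],
    [("carol", "click"), ("bob", "click")],
]

for rows in micro_batches:
    unbounded_table.extend(rows)              # new data = new rows appended
    incremental_update(running_counts, rows)  # process only the new rows
    # The incremental result equals a batch query over all rows so far.
    assert running_counts == batch_query(unbounded_table)

print(dict(running_counts))
```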

Question #24

Which configuration parameter directly affects the size of a spark-partition upon ingestion of data into Spark?

- A. spark.sql.files.maxPartitionBytes (Most Voted)

- B. spark.sql.autoBroadcastJoinThreshold

- C. spark.sql.files.openCostInBytes

- D. spark.sql.adaptive.coalescePartitions.minPartitionNum

- E. spark.sql.adaptive.advisoryPartitionSizeInBytes

Correct Answer:

A

GPT-4o - Answer

The parameter "spark.sql.files.maxPartitionBytes" sets the maximum number of bytes packed into a single Spark partition when reading file-based sources such as Parquet, JSON, and ORC (128 MB by default). It therefore controls how finely input files are split at read time, which affects read parallelism and the number of tasks feeding downstream stages. Understanding and tuning this setting can lead to better-performing Spark applications.
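
To make the effect concrete, here is a simplified model of how the split size for file reads is chosen and how many partitions one large splittable file yields. It is a sketch, not Spark's exact code path: the 128 MB and 4 MB defaults correspond to `spark.sql.files.maxPartitionBytes` and `spark.sql.files.openCostInBytes`, while the parallelism of 8 and the 1 GiB file are assumptions for the example.

```python
import math

MIB = 1024 * 1024


def max_split_bytes(total_bytes, num_files, max_partition_bytes=128 * MIB,
                    open_cost_bytes=4 * MIB, parallelism=8):
    """Simplified split-size rule: capped by maxPartitionBytes, floored by open cost."""
    bytes_per_core = (total_bytes + num_files * open_cost_bytes) // parallelism
    return min(max_partition_bytes, max(open_cost_bytes, bytes_per_core))


def estimated_partitions(file_bytes, **kwargs):
    """Approximate partition count for one splittable file of the given size."""
    return math.ceil(file_bytes / max_split_bytes(file_bytes, 1, **kwargs))


one_gib = 1024 * MIB
print(estimated_partitions(one_gib))                                # default 128 MB cap
print(estimated_partitions(one_gib, max_partition_bytes=64 * MIB))  # halved cap
```

Halving the cap doubles the estimated partition count in this model, which is why the setting directly affects partition size at ingestion.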

Question #25

A Spark job is taking longer than expected. Using the Spark UI, a data engineer notes that the Min, Median, and Max Durations for tasks in a particular stage show the minimum and median time to complete a task as roughly the same, but the max duration for a task to be roughly 100 times as long as the minimum.

Which situation is causing increased duration of the overall job?

- A. Task queueing resulting from improper thread pool assignment.

- B. Spill resulting from attached volume storage being too small.

- C. Network latency due to some cluster nodes being in different regions from the source data.

- D. Skew caused by more data being assigned to a subset of spark-partitions. (Most Voted)

- E. Credential validation errors while pulling data from an external system.

Correct Answer:

D
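
The pattern described in the question, where the minimum and median task durations are close but the maximum is far larger, can be checked with a few lines of Python. The durations below are invented, and the ratio threshold of 10 is an arbitrary assumption rather than a Spark setting.

```python
from statistics import median


def summarize(durations_s):
    """Return (min, median, max) of task durations, as shown in the Spark UI."""
    return min(durations_s), median(durations_s), max(durations_s)


def looks_skewed(durations_s, ratio_threshold=10.0):
    """Flag a stage whose slowest task dwarfs the typical (median) task."""
    _lo, mid, hi = summarize(durations_s)
    return mid > 0 and hi / mid >= ratio_threshold


# Invented example: 199 tasks take about 2 s, one straggler takes 200 s.
skewed_stage = [2.0] * 199 + [200.0]
even_stage = [2.0] * 200

print(summarize(skewed_stage), looks_skewed(skewed_stage))
print(summarize(even_stage), looks_skewed(even_stage))
```

Because a stage finishes only when its slowest task does, the single straggler sets the stage duration even though most tasks are quick.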

Question #26

Each configuration below is identical to the extent that each cluster has 400 GB total of RAM, 160 total cores and only one Executor per VM.

Given a job with at least one wide transformation, which of the following cluster configurations will result in maximum performance?

- A. Total VMs: 1, 400 GB per Executor, 160 Cores / Executor (Most Voted)

- B. Total VMs: 8, 50 GB per Executor, 20 Cores / Executor

- C. Total VMs: 16, 25 GB per Executor, 10 Cores / Executor

- D. Total VMs: 4, 100 GB per Executor, 40 Cores / Executor

- E. Total VMs: 2, 200 GB per Executor, 80 Cores / Executor

Correct Answer:

C
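
A quick arithmetic check confirms the premise of the question: every option adds up to the same total memory and cores, so the choice turns only on how those resources are divided among executors. The dictionary below simply transcribes the five options.

```python
# (total VMs, GB per executor, cores per executor), one executor per VM.
configs = {
    "A": (1, 400, 160),
    "B": (8, 50, 20),
    "C": (16, 25, 10),
    "D": (4, 100, 40),
    "E": (2, 200, 80),
}


def totals(vms, gb_per_executor, cores_per_executor):
    """Cluster-wide RAM (GB) and cores for a given layout."""
    return vms * gb_per_executor, vms * cores_per_executor


for name, (vms, gb, cores) in configs.items():
    total_gb, total_cores = totals(vms, gb, cores)
    print(f"{name}: {vms:>2} executors, {total_gb} GB, {total_cores} cores, "
          f"{gb / cores} GB per core")
```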

Question #27

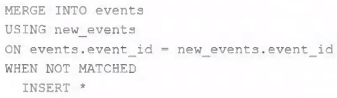

A junior data engineer on your team has implemented the following code block.

The view new_events contains a batch of records with the same schema as the events Delta table. The event_id field serves as a unique key for this table.

When this query is executed, what will happen with new records that have the same event_id as an existing record?

- A. They are merged.

- B. They are ignored. (Most Voted)

- C. They are updated.

- D. They are inserted.

- E. They are deleted.

Correct Answer:

B

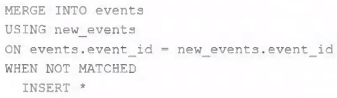

Question #28

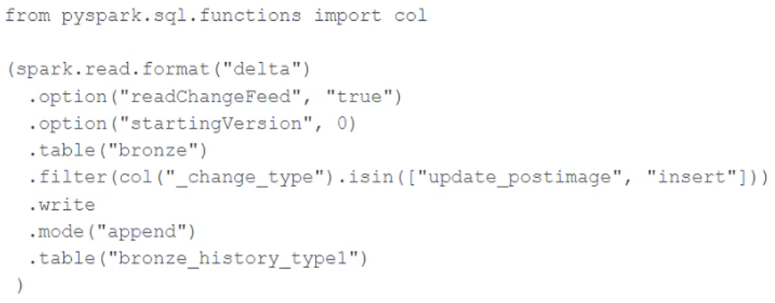

A junior data engineer seeks to leverage Delta Lake's Change Data Feed functionality to create a Type 1 table representing all of the values that have ever been valid for all rows in a bronze table created with the property delta.enableChangeDataFeed = true. They plan to execute the following code as a daily job:

Which statement describes the execution and results of running the above query multiple times?

- A. Each time the job is executed, newly updated records will be merged into the target table, overwriting previous values with the same primary keys.

- B. Each time the job is executed, the entire available history of inserted or updated records will be appended to the target table, resulting in many duplicate entries. (Most Voted)

- C. Each time the job is executed, the target table will be overwritten using the entire history of inserted or updated records, giving the desired result.

- D. Each time the job is executed, the differences between the original and current versions are calculated; this may result in duplicate entries for some records.

- E. Each time the job is executed, only those records that have been inserted or updated since the last execution will be appended to the target table, giving the desired result.

Correct Answer:

B

Question #29

A new data engineer notices that a critical field was omitted from an application that writes its Kafka source to Delta Lake. This happened even though the critical field was in the Kafka source. That field was further missing from data written to dependent, long-term storage. The retention threshold on the Kafka service is seven days. The pipeline has been in production for three months.

Which describes how Delta Lake can help to avoid data loss of this nature in the future?

- A. The Delta log and Structured Streaming checkpoints record the full history of the Kafka producer.

- B. Delta Lake schema evolution can retroactively calculate the correct value for newly added fields, as long as the data was in the original source.

- C. Delta Lake automatically checks that all fields present in the source data are included in the ingestion layer.

- D. Data can never be permanently dropped or deleted from Delta Lake, so data loss is not possible under any circumstance.

- E. Ingesting all raw data and metadata from Kafka to a bronze Delta table creates a permanent, replayable history of the data state. (Most Voted)

Correct Answer:

E

Question #30

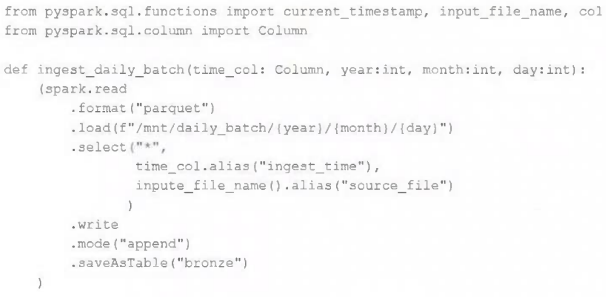

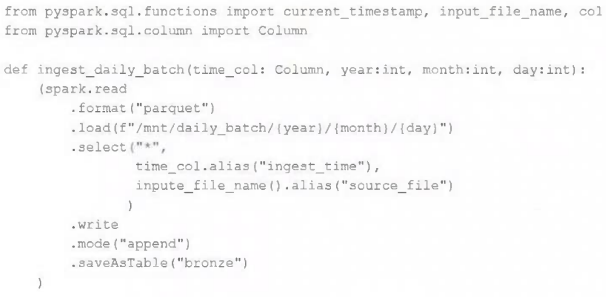

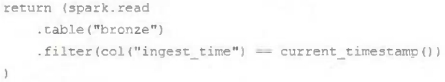

A nightly job ingests data into a Delta Lake table using the following code:

The next step in the pipeline requires a function that returns an object that can be used to manipulate new records that have not yet been processed to the next table in the pipeline.

Which code snippet completes this function definition?

def new_records():

- Areturn spark.readStream.table("bronze")

- Breturn spark.readStream.load("bronze")

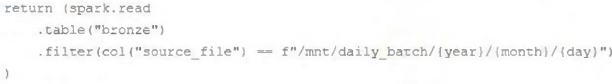

- C

- Dreturn spark.read.option("readChangeFeed", "true").table ("bronze")

- E

Most Voted

Most Voted

Correct Answer:

A
